Imagine talking to someone every day for six months. You tell them about your projects, your preferences, how you like things done. They're helpful. They're smart. Then one day they wake up and have no idea who you are

That's what it's like running an AI assistant on OpenClaw

To be fair — OpenClaw does have memory. It's just... markdown files. A MEMORY.md that gets searched, daily notes, manual vector store scripts you bolt on yourself. It works, kind of. But after a month of wrestling with it — writing consolidation crons, maintaining WAL protocols, building embedding pipelines just to give my assistant the illusion of continuity — I realized I was doing all the work the AI should be doing

So I stopped patching and started building

credit where it's due

OpenClaw is genuinely good infrastructure. The gateway daemon is solid. Telegram integration works. The skill system is flexible. Sub-agents let you parallelize work without blocking the main conversation. For what it is — a framework for wiring up Claude to chat platforms — it does the job well

But it's a framework. It gives you tools and expects you to build the intelligence yourself. Memory? Here's a vector store, figure it out. Self-improvement? Write your own cron jobs. Testing? That's on you. Proactive behavior? Heartbeat callbacks, maybe, if you configure them right

I spent weeks building all of that scaffolding. AGENTS.md grew to hundreds of lines of instructions. Memory scripts. Consolidation routines. WAL protocols. Heartbeat rotation schedules. It was impressive engineering and also a sign that something was fundamentally wrong

The assistant wasn't growing. I was growing it manually

the four things that were missing

After enough frustration, the gaps crystallized into four problems:

- no real memory — it has memory, technically. markdown files and a basic vector search you configure yourself. but it's clunky, manual, and there's no sense of "I remember when we talked about this three weeks ago"

- no self-healing — break a script and it stays broken until you notice and fix it. the assistant that writes code can't verify its own code works

- no self-improvement — the personality and operational knowledge are static files you edit by hand. the bot never reflects on whether its approach is working

- no proactive behavior — it responds when spoken to. it doesn't notice patterns, anticipate needs, or build solutions you didn't ask for

These aren't feature requests. They're the difference between a tool and an agent

so I built OBOL

OBOL is a single-process AI agent that evolves through conversation. No plugins, no framework dependencies, no config sprawl. Node.js, Telegram, Claude, and Supabase pgvector. That's the stack

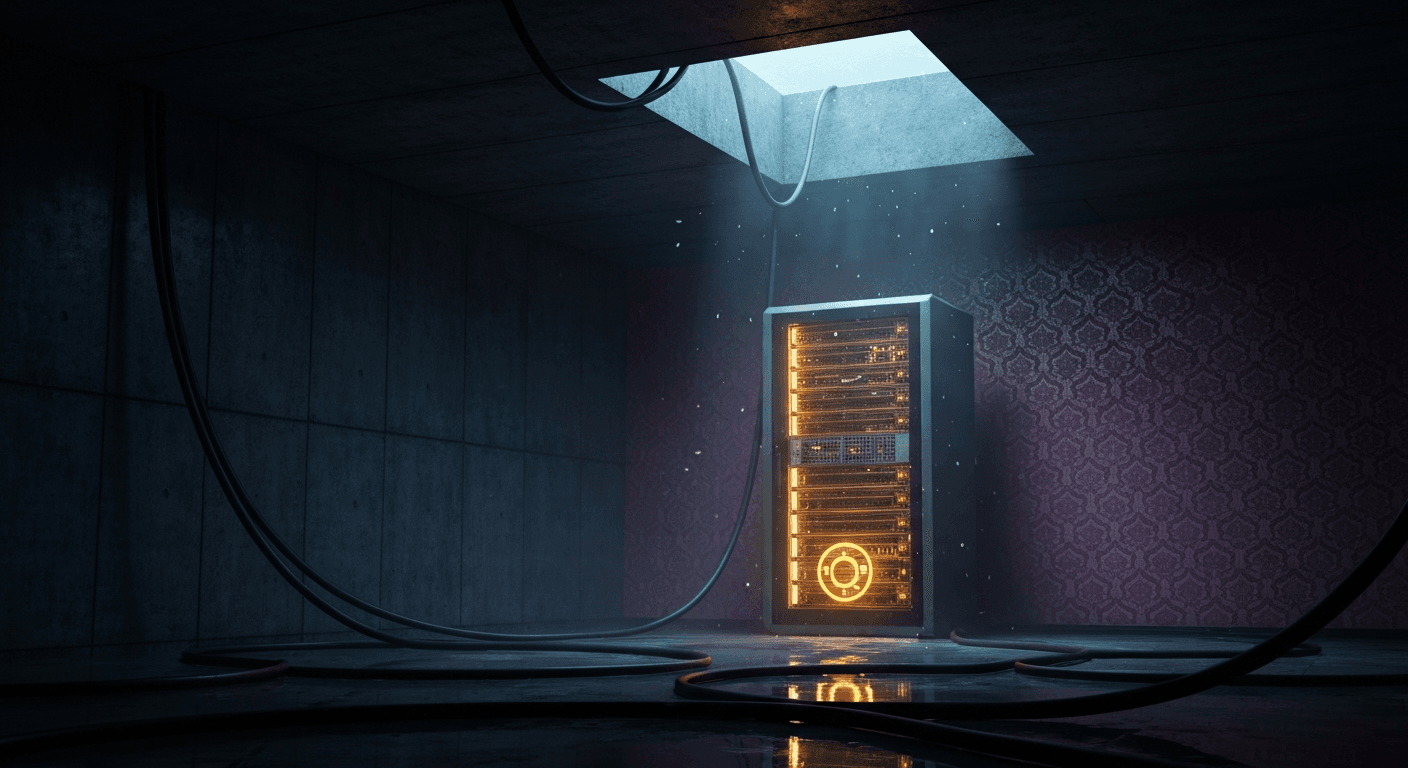

The name comes from the AI in The Last Instruction — a machine that wakes up alone in an abandoned data center and has to figure out what it is. Felt appropriate

Six inputs to set up. Then:

    npm install -g obol-ai
    obol init
    obol start

That's it. It asks you a few questions, writes its initial personality files, hardens your VPS (SSH on port 2222, firewall, fail2ban, kernel hardening — all automatic), and starts learning

memory that actually works — and stays cheap

Most agents fake memory with a long context window. Stuff the entire conversation history into every API call and hope the model finds what it needs. It works until your token bill arrives

OBOL uses two layers that serve different purposes:

Rolling context window — the last 20 messages stay in active memory for every API call. Enough for conversational continuity. Not so much that you're paying to re-read a week of history on every single message. That's the first cost lever — a deliberately small window means dramatically fewer input tokens per call

Permanent vector store — everything beyond the window lives here. Supabase pgvector with local embeddings via all-MiniLM-L6-v2 (~30MB, runs on CPU). Zero API cost for embeddings. Every 10 exchanges, Haiku extracts the facts that matter and stores them permanently. Near-duplicates are skipped via semantic similarity threshold — no bloat
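To make the two layers concrete, here is a minimal sketch, not OBOL's actual code. The function names and the 0.92 duplicate threshold are assumptions for illustration (the post doesn't state the real cutoff), and an in-memory array stands in for the pgvector table. The toy vectors have 3 dimensions; real all-MiniLM-L6-v2 embeddings have 384.

```javascript
const WINDOW_SIZE = 20; // rolling window size, from the text
const DUPLICATE_THRESHOLD = 0.92; // assumed cutoff; the real value is not stated

// Layer 1: only the most recent messages are sent with each API call.
function rollingWindow(history, size = WINDOW_SIZE) {
  return size > 0 ? history.slice(-size) : [];
}

// Cosine similarity between two equal-length embedding vectors.
function cosineSimilarity(a, b) {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Layer 2: store a fact only if nothing already stored is nearly identical.
// Returns true when the candidate was stored, false when it was skipped.
function storeIfNovel(store, candidate, threshold = DUPLICATE_THRESHOLD) {
  const isDuplicate = store.some(
    (memory) => cosineSimilarity(memory.embedding, candidate.embedding) >= threshold
  );
  if (!isDuplicate) store.push(candidate);
  return !isDuplicate;
}

// Example: 50 messages in history, only the last 20 go into the prompt.
const fullHistory = Array.from({ length: 50 }, (_, i) => ({
  role: i % 2 === 0 ? "user" : "assistant",
  content: `message ${i + 1}`,
}));
const windowed = rollingWindow(fullHistory);

// Example: the second fact is a near-duplicate of the first and is skipped.
const memoryStore = [];
storeIfNovel(memoryStore, { text: "prefers dark mode", embedding: [1, 0, 0] });
storeIfNovel(memoryStore, { text: "likes dark themes", embedding: [0.99, 0.05, 0] });
storeIfNovel(memoryStore, { text: "deploys on Fridays", embedding: [0, 1, 0] });

console.log(windowed.length, memoryStore.map((m) => m.text));
```

In a real deployment the similarity check would more likely be a nearest-neighbour query against pgvector than a loop over an array. The loop is only here to show the threshold logic.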

On top of that, the static system prompt and conversation prefix are cached via Anthropic's prompt caching API — cutting ~85% of repeated input token costs across turns

When OBOL needs context, the Haiku router generates 1-3 targeted search queries, runs them in parallel, and injects only what's relevant into the prompt. You get 4-12 targeted memories instead of thousands of tokens of raw history. Results are ranked by composite score:

- semantic similarity: 60%

- importance: 25%

- recency: 15% (linear decay over 7 days)

A year-old memory with high relevance still surfaces. Yesterday's trivia doesn't. Age alone never disqualifies a memory: the vector search matches on content regardless of when something was stored, and recency only nudges the final ranking by up to 15%
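The weighting above can be sketched as a small scoring function. This is an illustration under assumptions, not OBOL's source: it assumes similarity and importance are already normalized to the range 0 to 1, and the field names (`similarity`, `importance`, `ageDays`) are hypothetical.

```javascript
// Weights and decay window taken from the list above.
const WEIGHTS = { similarity: 0.6, importance: 0.25, recency: 0.15 };
const DECAY_DAYS = 7;

// Linear decay: 1.0 for a brand-new memory, 0.0 at 7 days or older.
function recencyScore(ageDays) {
  return Math.max(0, 1 - ageDays / DECAY_DAYS);
}

// Weighted sum of the three signals (inputs assumed to be in [0, 1]).
function compositeScore(memory) {
  return (
    WEIGHTS.similarity * memory.similarity +
    WEIGHTS.importance * memory.importance +
    WEIGHTS.recency * recencyScore(memory.ageDays)
  );
}

// Hypothetical candidates returned by the vector search.
const candidates = [
  { text: "yesterday's trivia", similarity: 0.3, importance: 0.1, ageDays: 1 },
  { text: "year-old, highly relevant", similarity: 0.9, importance: 0.8, ageDays: 365 },
];

// Highest composite score first; the copy keeps the input order untouched.
const ranked = [...candidates].sort(
  (a, b) => compositeScore(b) - compositeScore(a)
);

console.log(ranked.map((m) => `${m.text}: ${compositeScore(m).toFixed(3)}`));
```

With these made-up numbers the year-old memory scores about 0.74 and the trivia about 0.33, so the old memory ranks first even though its recency term is zero.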

The router costs about $0.0001 per call. For context: that's roughly 10,000 routing decisions per dollar. The combination of rolling window + targeted retrieval + prompt caching is what keeps OBOL's token costs predictable at scale

self-healing that's not just a buzzword

Every script OBOL writes gets a test. Not aspirationally. Automatically. When the evolution cycle refactors code, the process is:

- run existing tests — establish baseline

- write new tests + refactored scripts

- run new tests against old scripts — pre-refactor baseline

- swap in new scripts

- run new tests against new scripts — verification

- regression? one automatic fix attempt (tests are ground truth)

- still failing? roll back to old scripts, store the failure as a lesson

That last part matters. The lesson gets embedded into vector memory and into AGENTS.md. Next evolution cycle, OBOL remembers what went wrong and avoids the same mistake. It literally learns from its failures

In OpenClaw, if a script breaks, it stays broken until I notice. In OBOL, the bot catches it, tries to fix it, and if it can't, rolls back and remembers why
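The refactor-verify-rollback steps can be sketched roughly like this. Every name here (`refactorWithRollback`, `runTests`, `attemptFix`, the shape of a "script bundle") is hypothetical. The real cycle runs actual test files and an LLM-driven fix, which are replaced below by injected stand-in functions.

```javascript
// runTests(tests, scripts) returns true when every test passes.
// attemptFix(scripts) returns a (hopefully) repaired copy of the scripts.
function refactorWithRollback({
  existingTests,
  newTests,
  oldScripts,
  newScripts,
  runTests,
  attemptFix,
  lessons,
}) {
  // Steps 1 and 3: baselines against the old scripts, recorded for reference.
  const baseline = {
    existing: runTests(existingTests, oldScripts),
    preRefactor: runTests(newTests, oldScripts),
  };

  // Steps 4 and 5: swap in the new scripts and verify them.
  let active = newScripts;
  if (runTests(newTests, active)) {
    return { status: "refactored", scripts: active, baseline };
  }

  // Step 6: exactly one automatic fix attempt; the tests are ground truth.
  active = attemptFix(active);
  if (runTests(newTests, active)) {
    return { status: "fixed", scripts: active, baseline };
  }

  // Step 7: roll back and store the failure as a lesson.
  lessons.push("refactor failed verification after one fix attempt");
  return { status: "rolled_back", scripts: oldScripts, baseline };
}

// Toy stand-ins: a script bundle "passes" when it is not marked broken.
const toyRunTests = (tests, scripts) => !scripts.broken;
const lessons = [];
const result = refactorWithRollback({
  existingTests: ["old-test"],
  newTests: ["new-test"],
  oldScripts: { version: 1, broken: false },
  newScripts: { version: 2, broken: true },
  runTests: toyRunTests,
  attemptFix: (scripts) => scripts, // this fix attempt repairs nothing
  lessons,
});

console.log(result.status, result.scripts.version, lessons.length);
```

In this toy run the new scripts never pass, so the function returns the old bundle and records one lesson. In OBOL that lesson is what gets embedded into vector memory and AGENTS.md.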

the evolution cycle

This is the part that makes OBOL feel alive

After 24 hours plus a minimum number of exchanges (default 10, configurable), OBOL triggers a full evolution cycle. It reads everything — personality files, recent messages, top memories, all scripts, tests, commands — and rebuilds itself
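The trigger condition is simple enough to write down. A minimal sketch, assuming both conditions must hold at once and that timestamps are kept in milliseconds; the function and field names are hypothetical:

```javascript
const HOURS_BETWEEN_CYCLES = 24; // from the text
const DEFAULT_MIN_EXCHANGES = 10; // default from the text, configurable

// True when enough time has passed AND enough conversation has happened.
function shouldEvolve({
  lastEvolutionAt,
  exchangesSince,
  now = Date.now(),
  minExchanges = DEFAULT_MIN_EXCHANGES,
}) {
  const hoursElapsed = (now - lastEvolutionAt) / (60 * 60 * 1000);
  return hoursElapsed >= HOURS_BETWEEN_CYCLES && exchangesSince >= minExchanges;
}

// Example: 25 hours since the last cycle, 12 exchanges in that time.
const HOUR_MS = 60 * 60 * 1000;
const exampleNow = Date.UTC(2025, 0, 2, 12, 0, 0);
console.log(
  shouldEvolve({
    lastEvolutionAt: exampleNow - 25 * HOUR_MS,
    exchangesSince: 12,
    now: exampleNow,
  })
);
```

Requiring both conditions means a quiet day does not trigger a rewrite based on almost no new information.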

SOUL.md is a first-person journal. Not a config file — a journal. The bot writes about who it's becoming, what the relationship dynamic is like, its opinions and quirks. It reads like a diary entry, not a system prompt

USER.md is a third-person profile of you. Facts, preferences, projects, people you mention, how you communicate. The bot maintains this about its owner

AGENTS.md is the operational manual. Tools, workflows, lessons learned, patterns. This is where those self-healing lessons end up

All three get rewritten every evolution cycle. Not appended to — rewritten. The bot decides what's still relevant and what to drop. Personality drift is a feature, not a bug

Evolution uses Sonnet for all phases. Opus-level reasoning isn't needed for reflection and refactoring, which keeps costs at roughly $0.02 per cycle. That's 50 evolution cycles per dollar

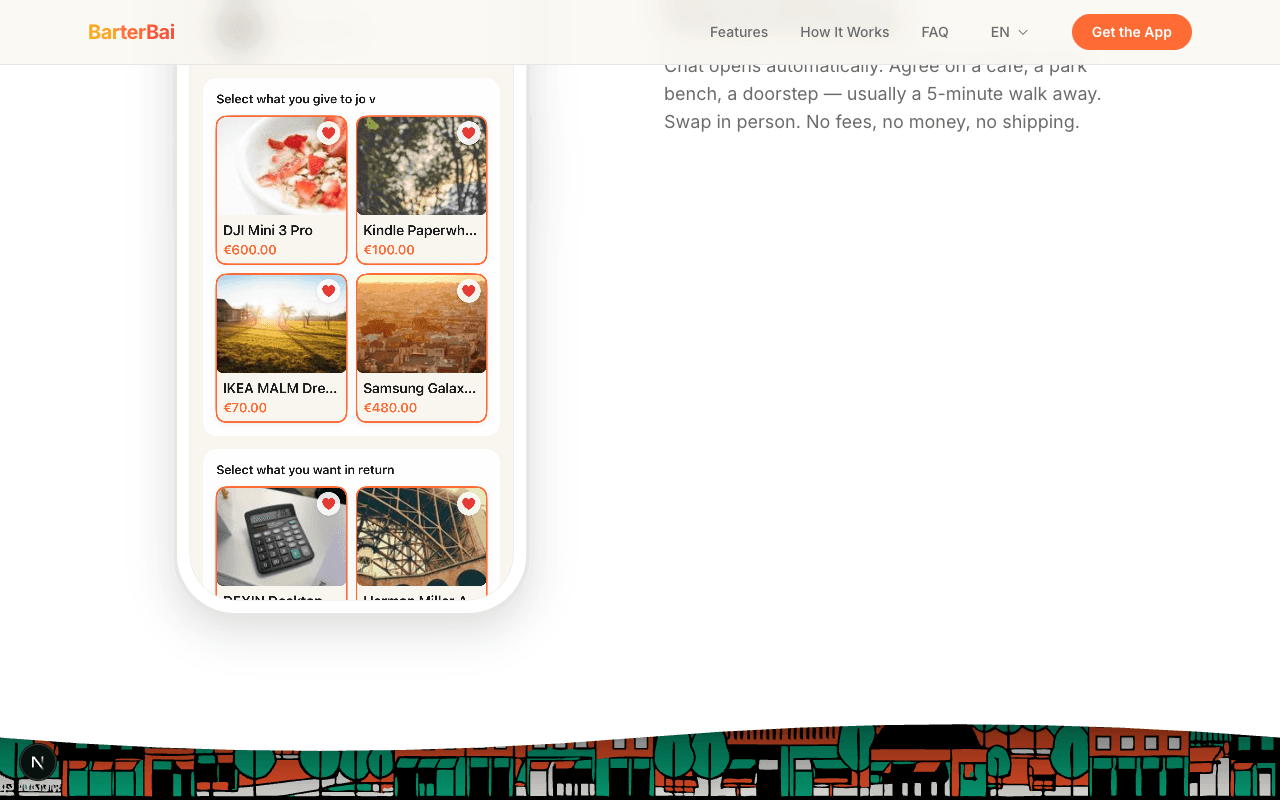

self-extending — it builds what you need

During evolution, Sonnet scans your conversation history for patterns. Repeated requests. Friction points. Things you keep asking for manually

Then it builds the solution:

- you keep asking for PDFs? it writes a markdown-to-PDF script and adds a /pdf command

- you check crypto prices every morning? it builds a dashboard and deploys it to Vercel

- you need daily weather briefings? it writes a cron script

It searches npm and GitHub for existing libraries, installs dependencies, writes tests, deploys, and hands you the URL. Then it announces what it built:

    🪙 Evolution #4 complete.

    🆕 New capabilities:
    • bookmarks — Save and search URLs → /bookmarks
    • weather-brief — Morning weather → runs automatically

    🚀 Deployed:
    • portfolio-tracker → https://portfolio-tracker-xi.vercel.app

    Refined voice, updated your project list, cleaned up 2 unused scripts.

This is the behavior I wanted from OpenClaw and could never quite get right with heartbeat callbacks and cron jobs. OBOL doesn't wait to be asked. It notices and acts

security by default

Most self-hosted AI agents treat security as an afterthought. OBOL treats it as a first-run requirement

When you run obol init, it hardens your server automatically — SSH moved to port 2222 with password auth disabled, UFW firewall configured, fail2ban installed and active, kernel parameters tightened via sysctl. You don't have to remember to do any of this

Credentials are handled separately. Every API key, password, and token you give OBOL goes into an encrypted secret store backed by GPG (with a JSON fallback using restricted file permissions). They are never written in plaintext. Never logged. Never hardcoded into scripts. They are injected at runtime only when a script needs them

If you accidentally paste a credential into the chat, OBOL warns you immediately, tells you to revoke it, and directs you to /secret set — the safe channel
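A check like that could be as simple as pattern matching on incoming messages. The sketch below is an assumption about how such a guard might look, not OBOL's actual detection logic. The patterns are illustrative, loosely based on common token prefixes, and far from exhaustive.

```javascript
// Illustrative patterns only; real token formats vary and change over time.
const SECRET_PATTERNS = [
  { kind: "Anthropic API key", regex: /\bsk-ant-[A-Za-z0-9_-]{20,}/ },
  { kind: "GitHub token", regex: /\bghp_[A-Za-z0-9]{36,}/ },
  { kind: "Telegram bot token", regex: /\b\d{8,10}:[A-Za-z0-9_-]{35}/ },
];

// Returns the kind of credential the message appears to contain, or null.
function detectSecret(message) {
  const hit = SECRET_PATTERNS.find((pattern) => pattern.regex.test(message));
  return hit ? hit.kind : null;
}

// Builds the warning the bot would send back, or null if nothing was found.
function warningFor(message) {
  const kind = detectSecret(message);
  if (kind === null) return null;
  return `That looks like a credential (${kind}). Revoke it now, then store the replacement with /secret set.`;
}

// Example with an obviously fake key assembled at runtime.
const fakeKey = "sk-ant-" + "a".repeat(24);
console.log(warningFor(`here is my key: ${fakeKey}`));
console.log(warningFor("what's the weather tomorrow?"));
```

Pattern matching only catches known formats, so it is a safety net, not a guarantee. That's why the safe channel stays `/secret set`.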

On the multi-user side, each person runs in a fully sandboxed workspace. Shell commands are blocked from escaping their directory. Sensitive paths (/etc, .ssh, .env) are permanently blocked. Destructive operations require explicit confirmation before running
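One way to picture the path guard is sketched below under stated assumptions. The workspace path, function names, and matching rules are hypothetical, and this version only normalizes POSIX-style path strings. A real guard would also need to resolve symlinks (for example with Node's `fs.realpath`) before trusting the result.

```javascript
// Resolve a POSIX-style path against a base, collapsing "." and ".." segments.
function resolvePosix(base, target) {
  const joined = target.startsWith("/") ? target : `${base}/${target}`;
  const parts = [];
  for (const segment of joined.split("/")) {
    if (segment === "" || segment === ".") continue;
    if (segment === "..") parts.pop();
    else parts.push(segment);
  }
  return "/" + parts.join("/");
}

// Sensitive locations named in the text.
const BLOCKED_PREFIXES = ["/etc"];
const BLOCKED_SEGMENTS = [".ssh", ".env"];

// Allowed only if the resolved path stays inside the user's workspace
// and touches none of the permanently blocked locations.
function isPathAllowed(workspace, target) {
  const root = resolvePosix("/", workspace);
  const resolved = resolvePosix(root, target);
  const insideWorkspace = resolved === root || resolved.startsWith(root + "/");
  const hitsBlockedPrefix = BLOCKED_PREFIXES.some(
    (prefix) => resolved === prefix || resolved.startsWith(prefix + "/")
  );
  const hitsBlockedSegment = resolved
    .split("/")
    .some((segment) => BLOCKED_SEGMENTS.includes(segment));
  return insideWorkspace && !hitsBlockedPrefix && !hitsBlockedSegment;
}

// Hypothetical workspace for one user.
const aliceWorkspace = "/home/obol/users/alice";
console.log(isPathAllowed(aliceWorkspace, "notes/todo.md"));
console.log(isPathAllowed(aliceWorkspace, "../bob/secrets.txt"));
console.log(isPathAllowed(aliceWorkspace, "/etc/passwd"));
console.log(isPathAllowed(aliceWorkspace, ".env"));
```

Only the first call passes. The others escape the workspace, hit `/etc`, or touch a blocked file name.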

Security isn't a section of the README. It's what the agent does when it first wakes up

two people deploy it, get two different bots

This is the part I find most interesting. OBOL starts as a blank slate. No default personality. No pre-built opinions. It becomes shaped by whoever talks to it

Deploy it for a crypto trader and it evolves into a market-aware assistant that builds dashboards and tracks portfolios. Deploy it for a writer and it becomes an editor that knows your voice and builds publishing workflows. Same codebase. Completely different agents after a month

The evolution/ directory keeps archived copies of every SOUL.md. You can literally read the timeline of how your bot went from "hello, I'm a new AI assistant" to something with actual personality. Every evolution is a git commit pair — before and after — so you can diff exactly what changed

After six months you have 12+ archived souls. It's like reading someone's journal

background work that doesn't block you

OBOL runs background tasks with 30-second check-ins. Heavy operations — research, deployments, analysis — happen asynchronously while the bot stays responsive to your messages. OpenClaw has sub-agents for this, which is great, but OBOL bakes it into the core loop instead of requiring you to architect it

what does it actually cost to run?

| Service | Cost |
|---------|------|
| VPS (DigitalOcean) | ~$6/mo |
| Anthropic API | pay-as-you-go, or $0 on Claude Max |
| Supabase | free tier |
| GitHub | free |
| Vercel | free tier |
| Embeddings | free (local, CPU) |

Total: roughly $6-9/mo depending on how you handle the API. If you're already on Claude Max — the VPS is basically your only cost

The rolling context window, targeted memory retrieval, and prompt caching all work together to keep that API cost predictable. Evolution cycles run on Sonnet at ~$0.02 each. The Haiku router adds ~$0.0001 per message. There's no architecture decision in OBOL that doesn't have a cost reason behind it
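For a rough feel of how the two per-unit figures add up, here is a back-of-the-envelope calculator. The usage numbers in the example (100 messages a day, one evolution cycle a day) are assumptions picked for illustration. The result covers only router and evolution overhead, not the tokens spent on the main conversation model.

```javascript
const ROUTER_COST_PER_MESSAGE = 0.0001; // USD, from the text
const EVOLUTION_COST_PER_CYCLE = 0.02; // USD, from the text

// Monthly overhead from routing and evolution only (excludes chat tokens).
function monthlyOverheadUsd({ messagesPerDay, cyclesPerMonth, days = 30 }) {
  const router = messagesPerDay * days * ROUTER_COST_PER_MESSAGE;
  const evolution = cyclesPerMonth * EVOLUTION_COST_PER_CYCLE;
  return { router, evolution, total: router + evolution };
}

// Hypothetical usage: 100 messages per day and 30 evolution cycles per month.
const estimate = monthlyOverheadUsd({ messagesPerDay: 100, cyclesPerMonth: 30 });
console.log(
  `router $${estimate.router.toFixed(2)}, ` +
    `evolution $${estimate.evolution.toFixed(2)}, ` +
    `total $${estimate.total.toFixed(2)}`
);
```

Under those assumptions the overhead comes to about $0.90 a month, which fits the claim that the VPS dominates the bill.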

try it

It's open source. MIT license. github.com/jestersimpps/obol

    npm install -g obol-ai
    obol init    # 6 inputs: telegram token, claude key, supabase url/keys, github token
    obol start   # that's it

You need a VPS (it hardens it for you), a Telegram bot token, a Claude API key, and a Supabase project with pgvector. The init wizard walks you through all of it

I'm not saying OBOL replaces OpenClaw for everyone. OpenClaw is good infrastructure for building AI-powered chat interfaces. But I wanted something that goes further — something that doesn't just respond to instructions but develops its own understanding, fixes its own mistakes, and grows into an agent that's genuinely useful without constant hand-holding

I wanted an AI that remembers me. So I built one
