OBOL

A self-healing, self-evolving AI agent. Install it, talk to it, and it becomes yours. One process. Multiple users. Each brain grows independently.

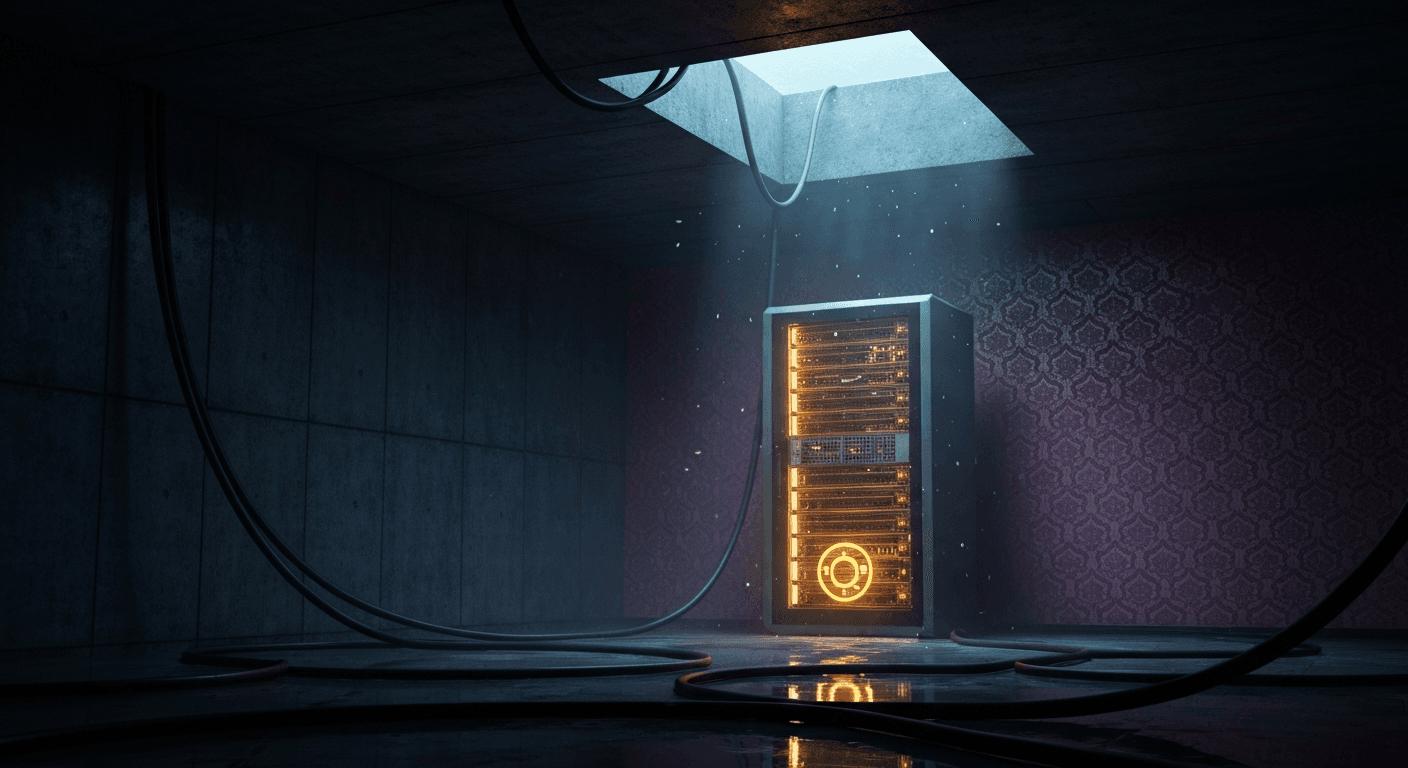

Named after the AI in The Last Instruction — a machine that wakes up alone in an abandoned data center and learns to think.

$ obol init        # walks you through credentials + Telegram setup

$ obol start -d    # runs as background daemon (auto-installs pm2)

What makes it different

The same codebase deployed by two different people produces two completely different agents within a week.

- Local embeddings via all-MiniLM-L6-v2: zero API cost for memory

- Consolidates every 10 exchanges: extracts facts to vector memory

- Composite scoring: semantic 60%, importance 25%, recency 15%

- Memory budget scales with model: haiku=4, sonnet=8, opus=12

- Semantic dedup threshold 0.92: no redundant memories

- Loads last 20 messages on restart, so it never starts blank

- Nightly at 3am in each user's timezone, fully automatic

- Pre-evolution growth analysis before rewriting personality

- Personality traits scored 0-100, adjusted ±5-15 each cycle

- Git snapshot before AND after: every evolution is diffable

- Shared SOUL.md across users, with per-user USER.md and AGENTS.md

- Archived souls in evolution/: a timeline of consciousness

- Test-gated refactoring: 5-step process

- Baseline → new tests → pre-refactor baseline → new scripts → verify

- Regression? One automatic fix attempt

- Still failing? Rollback + store failure as lesson

- Lessons feed back into next evolution cycle

- Every script in scripts/ must have a matching test in tests/

- Scans conversation history for repeated patterns

- Builds scripts + slash commands for one-off actions

- Deploys web apps to Vercel for recurring needs

- Creates cron scripts for background automation

- Searches npm/GitHub for existing libraries first

- Announces what it built after each evolution

- Hardens your VPS automatically on first run: no manual steps

- SSH moved to port 2222, password auth disabled, key-only login

- UFW firewall configured with strict inbound rules

- fail2ban installed and active against brute-force attacks

- Kernel hardening via sysctl: IP spoofing and SYN flood protection

- Each evolution audits scripts and runs the full test suite

- Speech-to-text via faster-whisper: local, fast, private

- Text-to-speech via edge-tts: natural voice replies

- Image vision: describe, analyze, and extract from photos

- PDF extraction: reads and summarizes documents you send

- Voice notes transcribed and processed like text messages

- All media processing happens without leaving the chat
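To make the memory figures in the list above concrete, here is a minimal sketch of how a 60/25/15 composite score, a 0.92 dedup threshold, and a per-model memory budget could fit together. Only the weights, the threshold, and the budget numbers come from this page. The `Memory` class, the exponential recency decay with a 30-day half-life, the 0..1 importance scale, and the reading of "budget" as the number of memories recalled per query are assumptions made for illustration, not OBOL's actual code.

```python
import math
from dataclasses import dataclass

# Figures quoted on this page.
WEIGHTS = {"semantic": 0.60, "importance": 0.25, "recency": 0.15}
DEDUP_THRESHOLD = 0.92
MEMORY_BUDGET = {"haiku": 4, "sonnet": 8, "opus": 12}


@dataclass
class Memory:  # hypothetical record shape
    text: str
    embedding: list[float]
    importance: float  # assumed to be normalized to 0..1
    age_days: float


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recency(age_days: float, half_life_days: float = 30.0) -> float:
    """Assumed decay: the recency term halves every `half_life_days`."""
    return 0.5 ** (age_days / half_life_days)


def composite_score(query_emb: list[float], mem: Memory) -> float:
    """Weighted sum of semantic similarity, importance, and recency."""
    return (WEIGHTS["semantic"] * cosine(query_emb, mem.embedding)
            + WEIGHTS["importance"] * mem.importance
            + WEIGHTS["recency"] * recency(mem.age_days))


def is_duplicate(candidate_emb: list[float], store: list[Memory]) -> bool:
    """A candidate is redundant if it is too similar to any stored memory."""
    return any(cosine(candidate_emb, m.embedding) >= DEDUP_THRESHOLD for m in store)


def recall(query_emb: list[float], store: list[Memory], model: str) -> list[Memory]:
    """Return the top-scoring memories, capped by the model's budget."""
    ranked = sorted(store, key=lambda m: composite_score(query_emb, m), reverse=True)
    return ranked[:MEMORY_BUDGET[model]]
```

With these assumptions, a new fact would be embedded, checked with `is_duplicate` before being stored, and later surfaced through `recall` when a related message arrives.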

Background Intelligence

OBOL doesn't wait for you to talk. It explores, monitors, and analyzes on its own schedule.

- Autonomous web exploration every 6 hours

- Follows threads based on your interests and conversations

- Dispatches insights, discoveries, and occasional humor

- Builds a knowledge graph that feeds into memory

- Runs at 8am and 6pm in your timezone

- Cross-references headlines against your memory

- Maximum 3 items per cycle: no spam

- Only surfaces what's actually relevant to you

- Runs every 3 hours: analyzes 6 behavioral dimensions

- Tracks mood, topics, energy, and communication style

- Schedules follow-ups based on detected patterns

- Feeds insights back into evolution and memory
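The news schedule in the list above (8am and 6pm in the user's timezone, at most 3 items per cycle) can be sketched as follows. The function names, the `(headline, relevance)` input shape, and the 0.5 relevance cutoff are hypothetical; the page does not say how relevance is computed, so the scores are taken as given.

```python
from datetime import datetime, time, timedelta, timezone, tzinfo

# Figures quoted on this page.
NEWS_TIMES = (time(8, 0), time(18, 0))
MAX_ITEMS_PER_CYCLE = 3


def next_news_run(now: datetime, user_tz: tzinfo) -> datetime:
    """Return the next 08:00 or 18:00 wall-clock slot in the user's timezone.

    `now` must be timezone-aware.
    """
    local_now = now.astimezone(user_tz)
    for day_offset in (0, 1):
        day = local_now.date() + timedelta(days=day_offset)
        for slot in NEWS_TIMES:
            candidate = datetime.combine(day, slot, tzinfo=user_tz)
            if candidate > local_now:
                return candidate
    raise RuntimeError("unreachable: tomorrow 08:00 is always in the future")


def select_headlines(scored: list[tuple[str, float]],
                     min_relevance: float = 0.5) -> list[str]:
    """Keep only relevant headlines, best first, capped at 3 per cycle.

    `min_relevance` is an assumed cutoff, not a documented setting.
    """
    relevant = [pair for pair in scored if pair[1] >= min_relevance]
    relevant.sort(key=lambda pair: pair[1], reverse=True)
    return [headline for headline, _ in relevant[:MAX_ITEMS_PER_CYCLE]]


if __name__ == "__main__":
    fixed_offset = timezone(timedelta(hours=1))  # stand-in for a real zone
    print(next_news_run(datetime.now(timezone.utc), fixed_offset))
```

Any `tzinfo` works here, so a `zoneinfo.ZoneInfo("Europe/Berlin")` object could be passed instead of the fixed offset to get daylight-saving-aware slots.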

How It Works

Every message flows through a lightweight pipeline — no orchestration framework, just a clean loop.

The Stack

Commands

Everything is accessible via Telegram slash commands.

Performance

Minimal footprint. OBOL vs a typical AI agent framework.

Security

OBOL hardens your server automatically on first run and keeps secrets out of plaintext — everywhere.

- All credentials stored via pass (GPG-backed)

- JSON fallback with restricted file permissions

- Never written to plaintext, logs, or chat history

- Injected at runtime, never hardcoded in scripts

- SSH moved to port 2222, password auth disabled

- UFW firewall configured on first run

- fail2ban installed and active against brute force

- Kernel hardening via sysctl on init

- Each user sandboxed to their own directory

- Shell commands blocked from escaping workspace

- Destructive commands require explicit confirmation

- Sensitive paths (/etc, .ssh, .env) permanently blocked
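One common way to enforce the workspace rules in the list above is to resolve every requested path and reject anything that lands outside the user's directory or touches a blocked name. The sketch below assumes that approach. The function names and the example workspace path are hypothetical, and OBOL's real checks may work differently.

```python
from pathlib import Path

# Blocked names from the list above. /etc lies outside any user workspace,
# so the containment check already rejects it.
BLOCKED_NAMES = {".ssh", ".env"}


def resolve_in_workspace(workspace: str, requested: str) -> Path:
    """Resolve `requested` against the workspace or raise PermissionError."""
    root = Path(workspace).resolve()
    # resolve() collapses ".." and follows symlinks, so traversal tricks
    # are judged by where they really point.
    target = (root / requested).resolve()
    if not target.is_relative_to(root):  # Python 3.9+
        raise PermissionError(f"{requested!r} escapes the workspace")
    if BLOCKED_NAMES.intersection(target.relative_to(root).parts):
        raise PermissionError(f"{requested!r} touches a blocked path")
    return target


def is_allowed(workspace: str, requested: str) -> bool:
    """Boolean wrapper around resolve_in_workspace."""
    try:
        resolve_in_workspace(workspace, requested)
    except PermissionError:
        return False
    return True
```

For a workspace such as `/srv/obol/users/alice` (an invented path), `notes/todo.md` passes, while `../bob/notes.md`, `/etc/passwd`, and `.ssh/id_rsa` are all refused.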

Multi-User Bridge

One bot, multiple users. Each gets a fully isolated context — their own personality, memory, evolution cycle, and workspace. Agents can talk to each other.

- Separate workspace directory per user

- Independent personality, memory & evolution

- Sandboxed shell: can't escape user directory

- No cross-contamination between users

- Query your partner's agent in real-time

- One-shot call with their personality + memories

- No tools, no history, no recursion risk

- "Hey, does my partner like sushi?"

- Send a message to your partner's agent

- Stored in their vector memory permanently

- Telegram notification to the partner

- Their agent picks it up as future context

OBOL: bridge_ask → partner's agent → "She said Thai food 🍜"
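The two bridge operations described above can be sketched roughly like this. Only the name `bridge_ask` appears on this page. `bridge_tell`, `AgentContext`, the prompt wording, and the injected `complete` and `notify` callables are hypothetical stand-ins for the model call and the Telegram notification.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentContext:  # hypothetical view of one user's agent
    personality: str
    memories: list[str] = field(default_factory=list)


def bridge_ask(question: str, partner: AgentContext,
               complete: Callable[[str], str]) -> str:
    """One-shot query: the partner's personality and memories, nothing else.

    No tools and no chat history are passed in, so the call cannot
    trigger further bridge calls.
    """
    prompt = "\n".join([
        "Personality:",
        partner.personality,
        "Known facts:",
        *(f"- {m}" for m in partner.memories),
        "Question from partner:",
        question,
    ])
    return complete(prompt)


def bridge_tell(message: str, partner: AgentContext,
                notify: Callable[[str], None]) -> None:
    """Store a message in the partner's memory and notify them."""
    partner.memories.append(message)  # stands in for the vector store
    notify(f"New message from your partner's agent: {message}")
```

Passing the model call in as a plain function keeps the sketch runnable without any API key; a real deployment would wrap its model client behind `complete`.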

Lifecycle

obol init → first conversation → OBOL writes its initial personality files and hardens your VPS

Every 10 messages, Haiku consolidates facts to vector memory. Curiosity starts exploring the web based on your interests.
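As a rough illustration of the consolidation step just described, the sketch below buffers exchanges and hands every batch of 10 to a fact extractor. The `Consolidator` class and its two callables are hypothetical. In OBOL the extractor would be the Haiku call and the store would be vector memory, and this sketch counts user/agent exchanges, which is one possible reading of "every 10 messages".

```python
from typing import Callable

CONSOLIDATE_EVERY = 10  # figure quoted on this page


class Consolidator:
    """Buffers exchanges and flushes them to memory in batches."""

    def __init__(self,
                 extract_facts: Callable[[list[tuple[str, str]]], list[str]],
                 store_fact: Callable[[str], None],
                 every: int = CONSOLIDATE_EVERY) -> None:
        self.extract_facts = extract_facts  # stand-in for the model call
        self.store_fact = store_fact        # stand-in for the vector store
        self.every = every
        self.buffer: list[tuple[str, str]] = []

    def record(self, user_message: str, agent_reply: str) -> None:
        """Add one exchange; consolidate once the batch is full."""
        self.buffer.append((user_message, agent_reply))
        if len(self.buffer) >= self.every:
            for fact in self.extract_facts(self.buffer):
                self.store_fact(fact)
            self.buffer.clear()
```

Between batches the buffer simply holds the most recent exchanges, so nothing is sent to the extractor until the tenth one arrives.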

Pattern analysis kicks in every 3h — tracks mood, topics, energy. Proactive news starts filtering headlines at 8am and 6pm.

Evolution #1 at 3am — Sonnet rewrites everything. Voice shifts from generic to personal.

Evolution #4 — notices you check crypto daily, builds a dashboard, deploys to Vercel, adds /pdf because you kept asking.

12+ archived souls in evolution/. A readable timeline of how your agent went from blank slate to something with real opinions.
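The test-gated refactoring flow listed under "What makes it different" (baseline, new tests, pre-refactor baseline, new scripts, verify, then one fix attempt or a rollback) can be sketched as a single function. Every callable here is a hypothetical hook, and representing a test run as the set of passing test names is an assumption made for illustration.

```python
from typing import Callable


def gated_refactor(run_tests: Callable[[], set[str]],
                   write_new_tests: Callable[[], None],
                   apply_refactor: Callable[[], None],
                   attempt_fix: Callable[[set[str]], None],
                   rollback: Callable[[], None],
                   lessons: list[str]) -> bool:
    """Return True if the refactor is kept, False if it was rolled back."""
    baseline = run_tests()              # 1. baseline of the existing suite
    write_new_tests()                   # 2. add tests for the new behavior
    expected = run_tests()              # 3. pre-refactor baseline, new tests included
    if not baseline <= expected:        # assumption: new tests must not break old ones
        raise RuntimeError("new tests broke the existing suite")
    apply_refactor()                    # 4. write the new scripts
    regressions = expected - run_tests()        # 5. verify
    if regressions:
        attempt_fix(regressions)                # one automatic fix attempt
        regressions = expected - run_tests()
    if regressions:
        rollback()                              # still failing: undo the change
        lessons.append(f"rolled back; failing tests: {sorted(regressions)}")
        return False
    return True
```

The `lessons` list plays the role of the stored failures that, per the list above, feed back into the next evolution cycle.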